
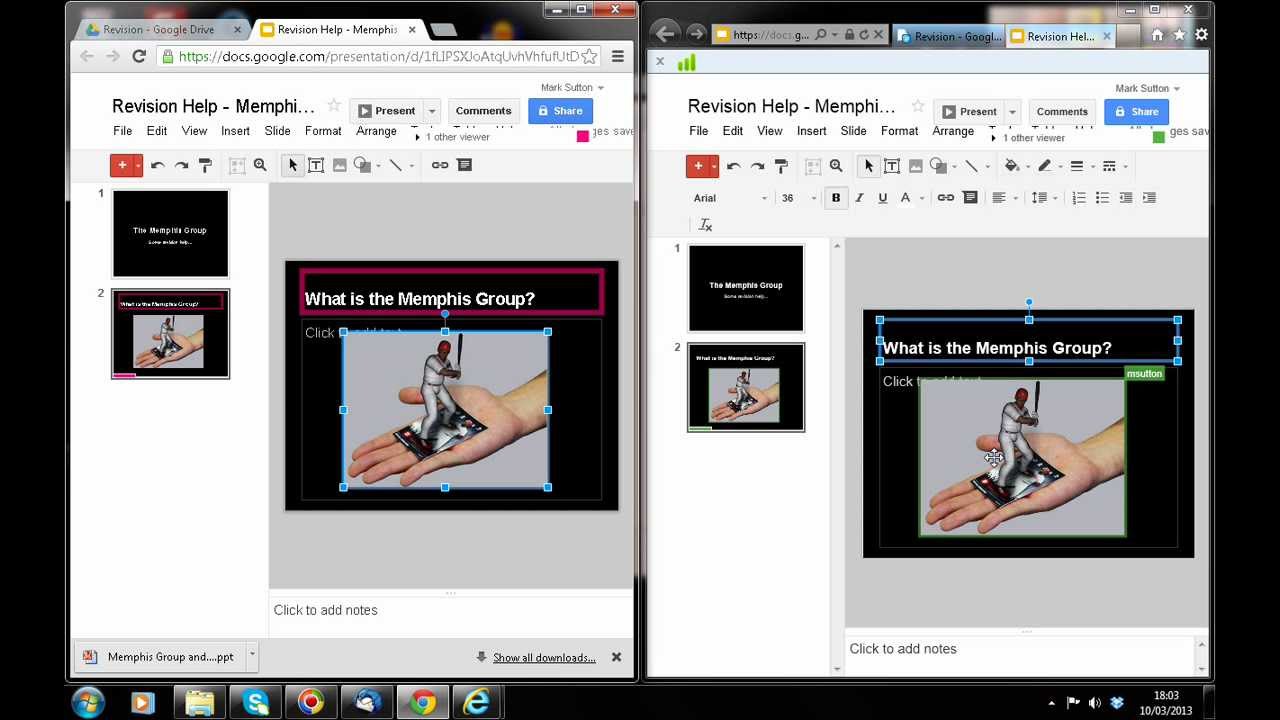

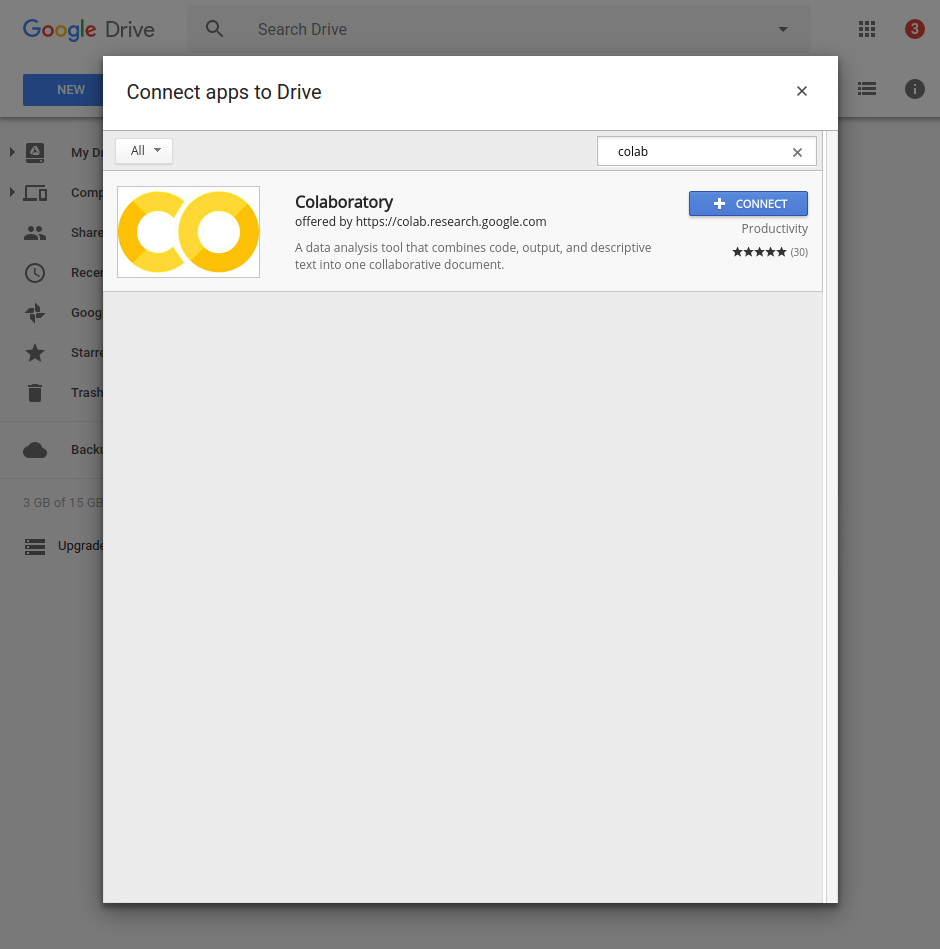

11/17/2023

Colaboratory Google Drive

I'm trying to download and extract the Google Speech Commands Dataset using a Google Colab notebook. The tar archive is fairly large, but from an ML dataset point of view it's pretty small. After executing the code snippet below, I can see that the archive has been extracted correctly, and all the files are on the virtual machine's disk (needless to say, there are 100K+ files, as expected). I understand that syncing the Colab virtual machine's storage with Google Drive needs some time. But even after waiting for quite a while (almost a day), Google Drive is not getting updated properly. Running a couple of lines of code reveals that only 10-12 of the 36 directories have been updated correctly; the rest are empty.

Is this some kind of bug in Google Drive's sync process, or am I doing something incorrectly? Any help or advice would be appreciated.

I'm using this fairly simple piece of code:

    import os
    # ...
    drive.mount('/gdrive', force_remount = True)
    # ...
    dir_path = "/gdrive/My Drive/Temp/ML/Final/dataset/"
    # ...
    for block in r.iter_content(chunk_size = 4096):
    # ...
    for member in tqdm(iterable = tar.getmembers(), total = len(tar.getmembers())):
    # ...
    !rm /gdrive/My\ Drive/Temp/ML/Final/dataset/

I was finally able to resolve this with just one line of code.

I have a very big file (around 6 GB), which I uploaded to Google Drive. I tried to extract it using a cloud converter, but that is paid if your file is bigger than 1 GB. Then I tried to extract it in Colab, after mounting Google Drive with `drive.mount('gdrive', force_remount=True)`.

I could see that the file was being extracted, but I couldn't find the extracted files in Google Drive. So, as in the original question here, I also thought that maybe Colab and Google Drive were not getting synced. But when I looked at it closely, I found that Colab extracts the files into the `/content` folder, no matter which directory you run the `unrar` command from. So I simply used this command and it solved my problem: `!unrar x`

At this point, the extracted files might all be in the Colab VM and not fully synced to Drive, so we have to sync Colab and Drive explicitly: `drive.flush_and_unmount()`. This might take a couple of hours if the data runs to gigabytes; otherwise it's very fast. Please run this command in a separate cell, not in the same cell as the extraction, because it unmounts the drive.

In Google Drive, right click in a folder and select More > Colaboratory from the context menu to create a notebook there. If you have interacted with Colab previously, visiting the Colab site will provide you with a file explorer where you can start a new file using the dropdown menu at the bottom of the window.

Colab notebooks can exist in various folders in Google Drive, depending on where the notebook files were created. Notebooks created in Google Drive will exist in the folder they were created in. Notebooks created from the Colab interface will default to a folder called 'Colab Notebooks', which is automatically added to the 'My Drive' folder of your Google Drive when you start working with Colab. Colab files can be identified by a yellow 'CO' symbol and the '.ipynb' file extension.

Existing notebook files (.ipynb) can be opened from Google Drive and the Colab interface. In Google Drive, open files by either double clicking on them and selecting Open with > Colaboratory from the button found at the top of the resulting page, or by right clicking on a file and selecting Open with > Colaboratory from the file's context menu. The Colab interface can open existing files from Google Drive, GitHub, and local hardware. Visiting the Colab interface after initial use will result in a file explorer modal appearing. From the tabs at the top of the file explorer, select a source.
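One way to approach the sync problem described here is to extract the archive onto the VM's local disk first, copy the result into the mounted Drive folder, and then compare per-directory file counts before flushing. The sketch below is only an illustration under that assumption, not the method used by either poster. The helper names (`extract_locally`, `count_files_per_dir`, `copy_and_verify`, `sync_drive`) are hypothetical, and the `google.colab` import is guarded so the helpers also run outside Colab.

```python
import os
import shutil
import tarfile

try:
    from google.colab import drive  # present only inside a Colab runtime
except ImportError:
    drive = None  # lets the helpers below run outside Colab


def extract_locally(archive_path, local_dir):
    """Extract a tar archive onto the VM's local disk, not the Drive mount."""
    os.makedirs(local_dir, exist_ok=True)
    with tarfile.open(archive_path) as tar:
        tar.extractall(local_dir)
    return local_dir


def count_files_per_dir(root):
    """Map each top-level subdirectory of root to the number of files under it."""
    counts = {}
    for name in sorted(os.listdir(root)):
        path = os.path.join(root, name)
        if os.path.isdir(path):
            counts[name] = sum(len(files) for _, _, files in os.walk(path))
    return counts


def copy_and_verify(local_dir, dest_dir):
    """Copy the extracted tree to dest_dir; return directories whose counts differ."""
    shutil.copytree(local_dir, dest_dir, dirs_exist_ok=True)
    src = count_files_per_dir(local_dir)
    dst = count_files_per_dir(dest_dir)
    # Each entry is (files in the local copy, files found at the destination).
    return {d: (n, dst.get(d, 0)) for d, n in src.items() if dst.get(d, 0) != n}


def sync_drive():
    """Flush pending writes to Drive; returns False when not running in Colab."""
    if drive is None:
        return False
    drive.flush_and_unmount()
    return True
```

In a notebook, this might be used by mounting Drive with `drive.mount('/gdrive', force_remount=True)`, calling `extract_locally` on an archive path of your own with a target under `/content`, passing that folder and the Drive dataset folder to `copy_and_verify`, and finally calling `sync_drive()` from a separate cell. An empty dictionary from `copy_and_verify` means every top-level directory has the same number of files on both sides.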
